"An intelligent system is a system that, given its time horizon, moves towards actions that expand its future action space."

This is a definition I keep coming back to. I want to think through it here.

What is an action space?

At any moment, a system sits in some state. From that state, it can take a finite set of actions. That set is its action space: the totality of things it can actually do, not in theory, but right now, given what it has, what it knows, and where it is.

The size and shape of that space differ enormously between systems and between moments. A person with savings, skills, strong relationships, and good health has a vast action space. A person who is broke, unwell, isolated, and unskilled has a narrow one. The world may feel the same to both of them on a given Tuesday. The difference is in what they can actually do next.

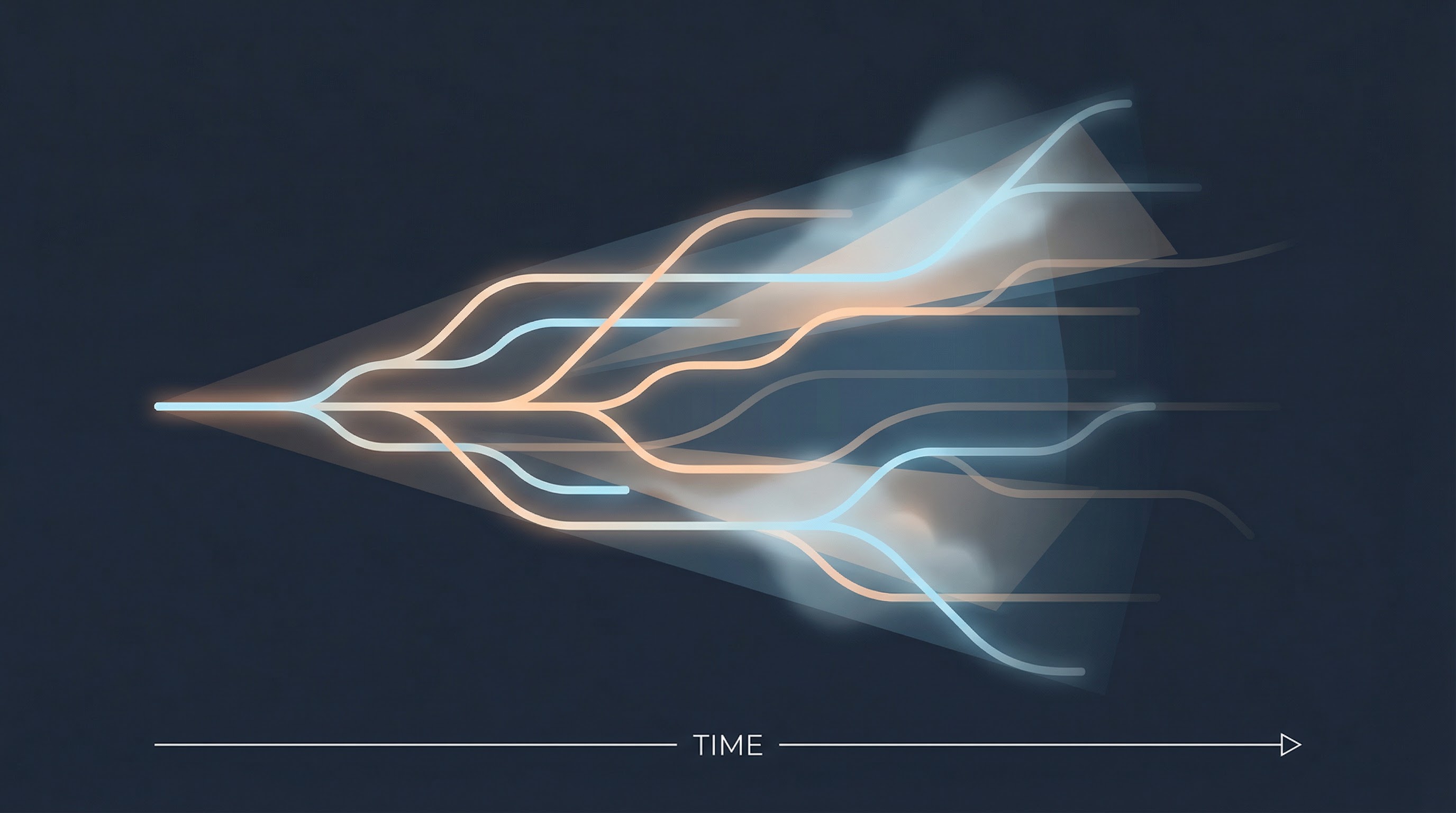

Actions themselves transform the action space. Some expand it: learning a language, saving money, building trust with someone, staying healthy. Others contract it: accumulating debt, burning a relationship, becoming dependent on a single income stream, neglecting your body. And most actions are somewhere in between, shifting the shape of the space in ways that are not obvious until later.

Why time horizon changes everything

The definition I started with includes a qualifier that matters a great deal: "given its time horizon." A system is not simply intelligent or unintelligent. Its apparent intelligence is always relative to the window of time over which we measure it.

Consider addiction. In the short term, it is a highly effective strategy for expanding one dimension of action space — specifically the experience of relief, pleasure, or escape. The system (a person) moves toward it because, within its current time horizon, it works. But over a longer window, the same behaviour contracts the action space dramatically: health deteriorates, relationships narrow, options close. What looked like intelligence at a one-hour horizon looks like its opposite at a ten-year horizon.

The same analysis applies to organisations. A company that cuts its research budget to hit a quarterly earnings target is behaving intelligently within a short time horizon. The same decision, evaluated over a decade, often looks like the beginning of decline. The action space was contracted to satisfy a near-term signal.

Intelligence, on this account, is not just about choosing well. It is about choosing well over the right window of time. Extend the horizon, and many things that look smart reveal themselves as short-sighted. Shorten it, and many things that look cautious or slow reveal themselves as quietly powerful.

"Intelligence is not just about choosing well. It is about choosing well over the right window of time."
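To make the horizon point concrete, here is a minimal sketch in Python. Everything in it is an assumption made for illustration: the toy world, the state and action names, and the choice to measure "future action space" as the number of distinct states still reachable a given number of steps after the first move. It is one possible way to operationalise the definition, not the only one.

```python
from typing import Dict, Set

# Hypothetical toy world, invented for illustration: each state maps
# action names to the state that action leads to.
TRANSITIONS: Dict[str, Dict[str, str]] = {
    "start": {"shortcut": "quick_win", "invest": "building"},
    # The shortcut offers three immediate options, all of which dead-end.
    "quick_win": {"a": "perk_1", "b": "perk_2", "c": "perk_3"},
    "perk_1": {"next": "stuck"},
    "perk_2": {"next": "stuck"},
    "perk_3": {"next": "stuck"},
    "stuck": {"stay": "stuck"},
    # Investing offers a single option at first, then branches out.
    "building": {"next": "skilled"},
    "skilled": {"a": "path_1", "b": "path_2"},
    "path_1": {"a": "goal_1", "b": "goal_2", "c": "goal_3"},
    "path_2": {"a": "goal_4", "b": "goal_5", "c": "goal_6"},
}
# Goal states are terminal: the only available action is to stay put.
for i in range(1, 7):
    TRANSITIONS[f"goal_{i}"] = {"stay": f"goal_{i}"}


def reachable(state: str, steps: int) -> Set[str]:
    """Return the states reachable from `state` in exactly `steps` actions."""
    frontier = {state}
    for _ in range(steps):
        frontier = {nxt for s in frontier for nxt in TRANSITIONS[s].values()}
    return frontier


def best_first_move(state: str, horizon: int) -> str:
    """Pick the action whose successor keeps the most states reachable
    `horizon` steps later (the assumed proxy for future action space)."""
    options = TRANSITIONS[state]
    return max(options, key=lambda a: len(reachable(options[a], horizon)))


if __name__ == "__main__":
    for horizon in (1, 2, 3):
        print(horizon, best_first_move("start", horizon))
```

With a horizon of one step the shortcut wins, because it leaves three states reachable against one. With a horizon of three steps the same rule picks investing, because six states remain reachable against one. The selection rule never changes; only the window does.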

This is actually studied formally in AI

The idea is not just a philosophical intuition. It has a formal counterpart in reinforcement learning and information theory called empowerment, developed by researchers including Klyubin, Polani, and Nehaniv in the mid-2000s.

Empowerment, in this technical sense, measures the maximum amount of information an agent can inject into its future states through its actions. Formally, it is the channel capacity between the agent's actions and the states it senses afterwards. A high-empowerment state is one from which many distinct futures are reachable. A low-empowerment state is one where actions do not much change what comes next. The empowerment-maximising agent is not trying to reach any particular goal. It is trying to stay in positions from which goals remain possible.

There is something elegant about this. It suggests that a sufficiently general form of intelligence does not require a fixed objective. It requires only a preference for keeping options open, and a time horizon long enough to take that preference seriously. Stuart Armstrong at the Future of Humanity Institute has argued something adjacent, an idea usually discussed under the name instrumental convergence: that almost any sufficiently capable AI system, regardless of its specific goal, will develop a sub-goal of self-preservation and resource acquisition, because these expand the action space available for pursuing its primary objective. Intelligence converges on optionality.
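For readers who want to see the technical notion of empowerment in miniature, here is a rough sketch in Python. It rests on a simplifying assumption: the world is fully deterministic, in which case the channel capacity reduces to the base-2 logarithm of the number of distinct states the agent can end up in after n actions. The grid world, the action names, and the function names are all hypothetical. With noisy dynamics, computing the capacity needs an iterative method such as the Blahut-Arimoto algorithm, which this sketch does not attempt.

```python
import math
from itertools import product
from typing import Dict, Tuple

Position = Tuple[int, int]

# Hypothetical action set for a square grid world: (dx, dy) offsets.
ACTIONS: Dict[str, Tuple[int, int]] = {
    "stay": (0, 0),
    "up": (0, -1),
    "down": (0, 1),
    "left": (-1, 0),
    "right": (1, 0),
}


def step(pos: Position, action: str, size: int) -> Position:
    """Apply one action; walking into a wall leaves that coordinate unchanged."""
    dx, dy = ACTIONS[action]
    x = min(max(pos[0] + dx, 0), size - 1)
    y = min(max(pos[1] + dy, 0), size - 1)
    return (x, y)


def empowerment(pos: Position, n_steps: int, size: int) -> float:
    """n-step empowerment in bits, assuming deterministic dynamics:
    log2 of the number of distinct end states over all action sequences."""
    end_states = set()
    for sequence in product(ACTIONS, repeat=n_steps):
        current = pos
        for action in sequence:
            current = step(current, action, size)
        end_states.add(current)
    return math.log2(len(end_states))


if __name__ == "__main__":
    size = 5
    print("centre:", empowerment((2, 2), 2, size))
    print("corner:", empowerment((0, 0), 2, size))
```

On a 5 by 5 grid with two steps, the centre cell can reach 13 distinct cells and the corner cell only 6, so the centre has the higher empowerment. Nothing about the centre is a goal. It is simply the position from which the most futures stay open.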

The counterintuitive case: when narrowing is the intelligent move

There is an obvious objection. Sometimes the intelligent move is to deliberately constrain your action space. Commitment devices are a real phenomenon: burning your boats, signing a contract, making a public promise. By reducing your future options, you increase the likelihood of the one outcome you actually want.

Deep specialisation works the same way. The person who becomes genuinely world-class at one thing has sacrificed many adjacent paths to get there. They have, in one sense, contracted their action space. But in another sense, the depth of their expertise opens up an entirely different category of actions that generalists can never reach.

I think the resolution is that these apparent exceptions are actually consistent with the definition, provided you are careful about how you measure the space. Burning your boats does not expand the dimension of "can I retreat?" It expands the dimension of "can I succeed in this specific endeavour?" — whether by forcing focus, signalling commitment to others, or removing the cognitive load of keeping the exit open. The total action space, properly dimensioned, often grows.

What the definition rules out is something more specific: actions that contract the space without compensating expansion elsewhere, chosen without awareness of the trade-off, within a time horizon too short to see the cost.

"The question is never simply whether an action narrows or opens options. It is whether the contraction is chosen deliberately, over a long enough horizon, with clear eyes."

Learning as the clearest case

Learning is the canonical example of action space expansion, which is why I think about this definition often in the context of building Luna.

Every piece of knowledge that is genuinely retained — not just encountered but actually integrated — adds branches to your future decision tree. You can now see problems you could not see before. You can take actions you could not take before. You can connect ideas across domains in ways that were previously unavailable to you. The action space grows.

But here is the implication that I find most interesting: if most of what we passively consume is forgotten within days (and Rasmus has written about the neuroscience of exactly this), then most of our content consumption is not actually expanding our action space. It is producing the sensation of expansion without the substance. We feel more informed. We feel like we learned something. The action space does not change.

TikTok is the extreme version of this: a feed engineered to produce the maximum feeling of stimulation and novelty while delivering the minimum that actually sticks. As Emilie wrote about the attention economy, the platform doesn't care whether what it shows you expands your world. It cares whether you keep scrolling.

This is not a small gap. It is the difference between an investment and a cost that feels like an investment. And most people, most of the time, are paying the cost.

Why I think about this for products too

When I think about what Luna is trying to do at its core, this definition is close to the right framing. We are trying to build a system that helps people actually expand their action space through what they listen to, rather than simply producing the feeling that they have.

That sounds modest when stated plainly. It is not. It requires fighting against the defaults of passive consumption, against the forgetting curve, against the design of platforms that reward time spent over anything actually transferred. It requires building friction back into a process that has been engineered to be frictionless, because the right kind of friction is what makes encoding real.

I also think the definition applies to products themselves. A product that locks users in through switching costs, that makes data hard to export, that exploits habit rather than earning it, is one that contracts the user's action space in exchange for short-term retention. That might look intelligent at a one-quarter horizon. It looks very different over five years.

The products I respect most are the ones that, by design, leave people more capable than they found them. Their users have a larger action space after using the product than before. That is a hard standard to meet. It is the one worth aiming for.

About the author

Christian Løken

CEO · Luna